What am I missing, and how can I leverage a requirements.txt file? I am clearly doing something wrong, because the PythonVirtualenvOperator is not handling my requirements.txt file properly. When the task runs, I quickly receive an error:

`Executing cmd: /tmp/venvfn63dc/bin/pip install m o d u l e s / m o n d a y / r e q u i r e m e n t s . t x t`

`ERROR: Directory '/' is not installable. Neither 'setup.py' nor 'pyproject.toml' found.`

This seems to indicate that PythonVirtualenvOperator is treating my requirements parameter like a list instead of a string. Every character of the path is passed to pip as a separate argument, and pip then tries to install the bare `/` as a directory. That surprised me, because my usage seems to fit the implementation described on GitHub: `requirements` is a string, not a list, and it matches the `*.txt` template extension.

The commands to get Airflow up and running could be baked into the image, but this will be part of the training, so we prefer to leave them out and run them manually. Voilà: Airflow running in Docker Desktop. If you open the Dockerfile, you will see that it involves installing Airflow, as stated in the requirements.txt file. It switches to `USER root`, copies the file with `COPY requirements.txt /tmp/requirements.txt`, switches back with `USER airflow`, and installs the requirements as the airflow user (docs: …) with `RUN pip install --no-cache-dir -r /tmp/requirements.txt`. It also runs `RUN pip3 install --upgrade`; if your CI/CD system caches Docker layers, this could be a separate Docker statement.
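Regarding the PythonVirtualenvOperator error: iterating over a Python `str` yields its characters, which is exactly the shape of the failing pip call. A minimal workaround sketch, assuming your Airflow version expects an iterable of requirement strings rather than a file path, is to read the file yourself and pass the resulting list as `requirements`. The helper `read_requirements` is hypothetical and only handles plain requirement lines and `#` comments, not pip options such as `-r other.txt`.

```python
from __future__ import annotations

from pathlib import Path


def read_requirements(path: str) -> list[str]:
    """Return requirement specifiers from a requirements.txt-style file.

    Hypothetical helper: skips blank lines and full-line comments and strips
    trailing " #" comments. It does not expand pip options such as -r.
    """
    requirements = []
    for raw_line in Path(path).read_text(encoding="utf-8").splitlines():
        # Drop an inline comment that starts with " #".
        line = raw_line.split(" #", 1)[0].strip()
        if not line or line.startswith("#"):
            continue
        requirements.append(line)
    return requirements


# Iterating over a str yields characters, matching the failing pip call.
path_string = "modules/monday/requirements.txt"
print(" ".join(path_string))
# m o d u l e s / m o n d a y / r e q u i r e m e n t s . t x t
```

In a DAG file you could then pass something like `requirements=read_requirements(str(Path(__file__).parent / "requirements.txt"))`. Building the path from `__file__` avoids depending on the working directory, and the file must be readable wherever the DAG file is parsed (scheduler and workers).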

I am seeking guidance on how to properly use a requirements.txt file with Airflow's PythonVirtualenvOperator. In Airflow 2.2.3, PythonVirtualenvOperator was updated to allow templated requirements.txt files in the `requirements` parameter; however, I am unable to use that parameter properly. The task I'd like to reference requirements.txt for is defined like so: `def sync_board_items():` imports the function with `from … import sync_board_items` and then calls `sync_board_items(board_id=XXXX, table=XXXX)`.

The Airflow image contains almost enough pip packages to operate, but we still need to install extra packages such as clickhouse-driver, pandahouse and apache-airflow-providers-slack. Since 2.1.1, Airflow supports the `_PIP_ADDITIONAL_REQUIREMENTS` environment variable to add extra requirements when all containers start. The relevant environment settings are:

- `AIRFLOW__CORE__DAGS_ARE_PAUSED_AT_CREATION: 'true'`
- `AIRFLOW__API__AUTH_BACKEND: '.basic_auth'`
- `AIRFLOW_CONN_RDB_CONN: …`
- `_PIP_ADDITIONAL_REQUIREMENTS: 'pandahouse==0.2.7 clickhouse-driver==0.2.1 apache-airflow-providers-slack'`

Some directories in the container are mounted, which means that their contents are synchronized between the services and persisted:

- `logs`: contains logs from task execution and the scheduler.
- `plugins` (mounted as `mnt/airflow/plugins:/opt/airflow/plugins`): you can put your custom plugins here.

Finally, redis is the broker that forwards messages from the scheduler to the worker.
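For the `_PIP_ADDITIONAL_REQUIREMENTS` value: it is a single space-separated string of pip requirement specifiers, and exact pins need `==` (pip rejects a single `=`). The sketch below builds that string from a mapping so the pins stay consistent. The function name, the mapping format and the simplified name/version check are illustrative assumptions, not part of Airflow. The official docker-compose file itself warns that this variable is meant for quick checks; for anything long-lived, installing the packages in a custom image (as in the Dockerfile approach) is the more robust route.

```python
from __future__ import annotations

import re

# Loose check: a package name optionally followed by an exact "==" pin.
# This is a simplification of PEP 508, enough to catch "name=1.0"-style typos.
_PIN_PATTERN = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(==[A-Za-z0-9.*+!_-]+)?$")


def build_pip_additional_requirements(packages: dict[str, str | None]) -> str:
    """Join package pins into the space-separated form the variable expects.

    ``packages`` maps a distribution name to an exact version, or to None to
    leave it unpinned (hypothetical input format chosen for illustration).
    """
    specs = []
    for name, version in packages.items():
        spec = name if version is None else f"{name}=={version}"
        if not _PIN_PATTERN.match(spec):
            raise ValueError(f"unexpected requirement specifier: {spec!r}")
        specs.append(spec)
    return " ".join(specs)


value = build_pip_additional_requirements(
    {
        "pandahouse": "0.2.7",
        "clickhouse-driver": "0.2.1",
        "apache-airflow-providers-slack": None,
    }
)
print(f"_PIP_ADDITIONAL_REQUIREMENTS: '{value}'")
```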

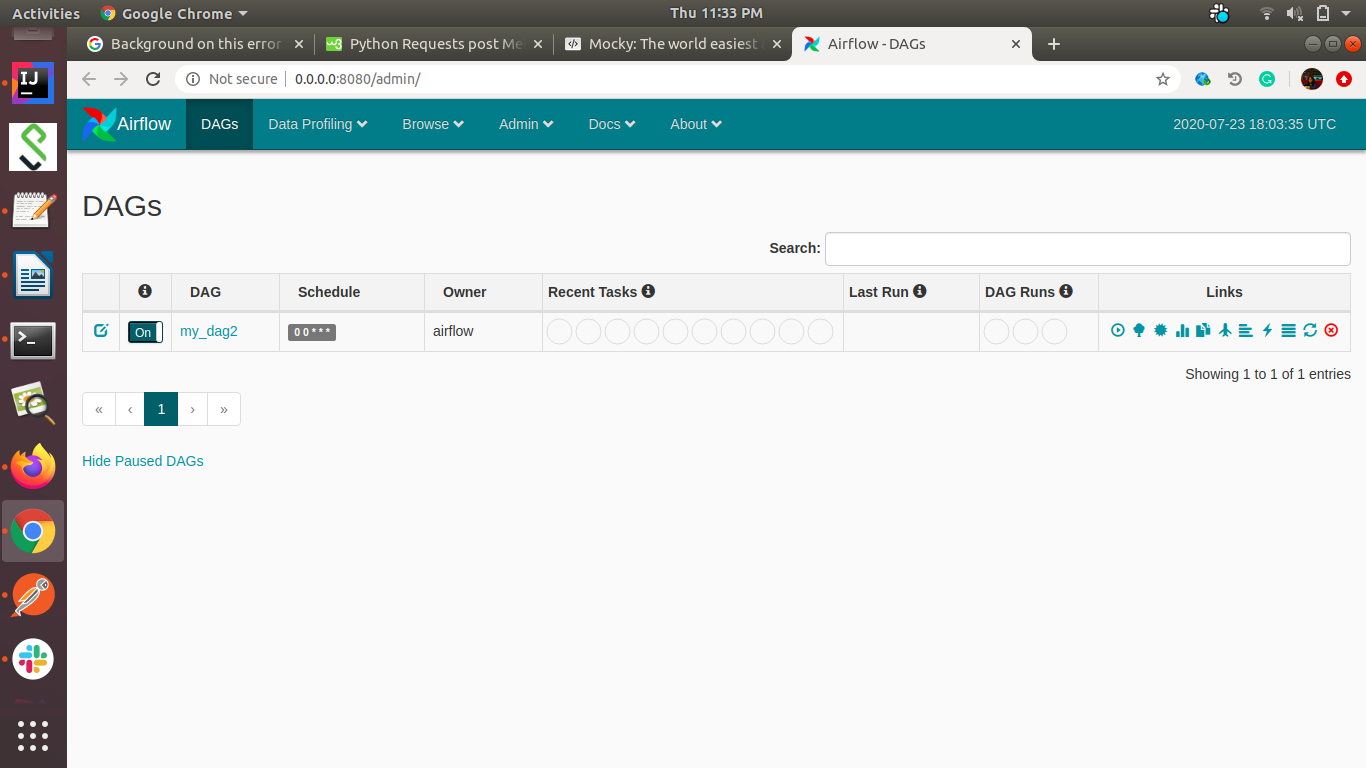

For a quick setup to start learning Apache Airflow, we will deploy Airflow using docker-compose, running on AWS EC2, with persistent Airflow logs, DAGs and plugins. Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule and monitor workflows.

Prerequisites:

- macOS: install Docker Desktop.
- Linux/Ubuntu: install Docker Engine and Docker Compose.

The docker-compose.yaml contains several service definitions:

- airflow-scheduler: the scheduler monitors all tasks and DAGs, then triggers the task instances once their dependencies are complete.
- airflow-webserver: the webserver (available at …).
- airflow-worker: the worker that executes the tasks given by the scheduler.
- airflow-init: the initialization service.
- flower: the Flower app for monitoring the environment (available at …).
- postgres: the database.

Understand Airflow parameters in `airflow.models`.
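Since logs, DAGs and plugins are persisted through bind mounts, the host directories should exist before the stack starts. On Linux, the official quick-start also writes `AIRFLOW_UID` to a `.env` file; otherwise files created in those mounts end up owned by root. A small sketch of that preparation step, assuming the compose file sits in the project root and mounts `./dags`, `./logs` and `./plugins` (adjust if your mounts point elsewhere, such as under `mnt/airflow`). The helper name is hypothetical.

```python
from __future__ import annotations

import os
from pathlib import Path

# Directories assumed to be bind-mounted by docker-compose.yaml.
MOUNTED_DIRS = ("dags", "logs", "plugins")


def prepare_airflow_project(root: str | os.PathLike = ".") -> Path:
    """Create the mounted directories and, on POSIX hosts, a .env with AIRFLOW_UID.

    Hypothetical helper mirroring `mkdir -p ./dags ./logs ./plugins` and
    writing `AIRFLOW_UID=$(id -u)` into .env.
    """
    root_path = Path(root)
    for name in MOUNTED_DIRS:
        # exist_ok makes the step safe to re-run, like `mkdir -p`.
        (root_path / name).mkdir(parents=True, exist_ok=True)

    env_file = root_path / ".env"
    # os.getuid exists only on POSIX systems (Linux, macOS). An existing .env
    # is left untouched so other settings in it are not overwritten.
    if hasattr(os, "getuid") and not env_file.exists():
        env_file.write_text(f"AIRFLOW_UID={os.getuid()}\n", encoding="utf-8")
    return root_path
```

After running it once from the project root, `docker compose up airflow-init` and then `docker compose up` can start the services against the prepared directories.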
